
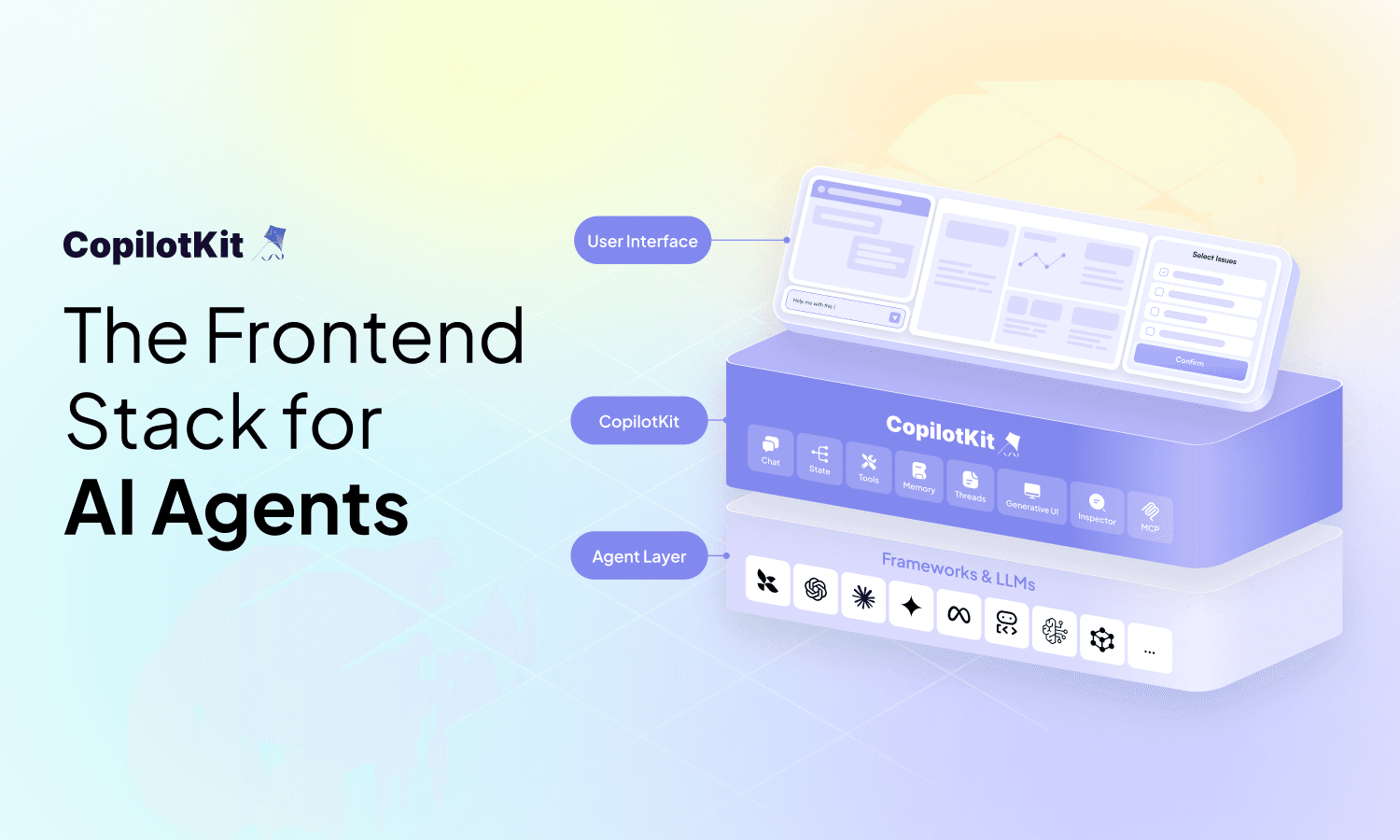

Today, tech giants are investing heavily in embedded AI copilots: intelligent virtual assistants built into their products. Developers who want to integrate similar assistants must either use inflexible ‘low-code’ solutions or build everything from scratch.

That’s why we built CopilotKit: for any developer to quickly integrate custom AI copilot experiences into products, with the full power of the latest AI tooling behind it.

CopilotKit v1.0 brings significant improvements in performance and experience for both users and developers, plus the latest from the CopilotKit Cloud beta.

Let’s dive into what’s included in the v1.0 release, or watch the video walkthrough here.

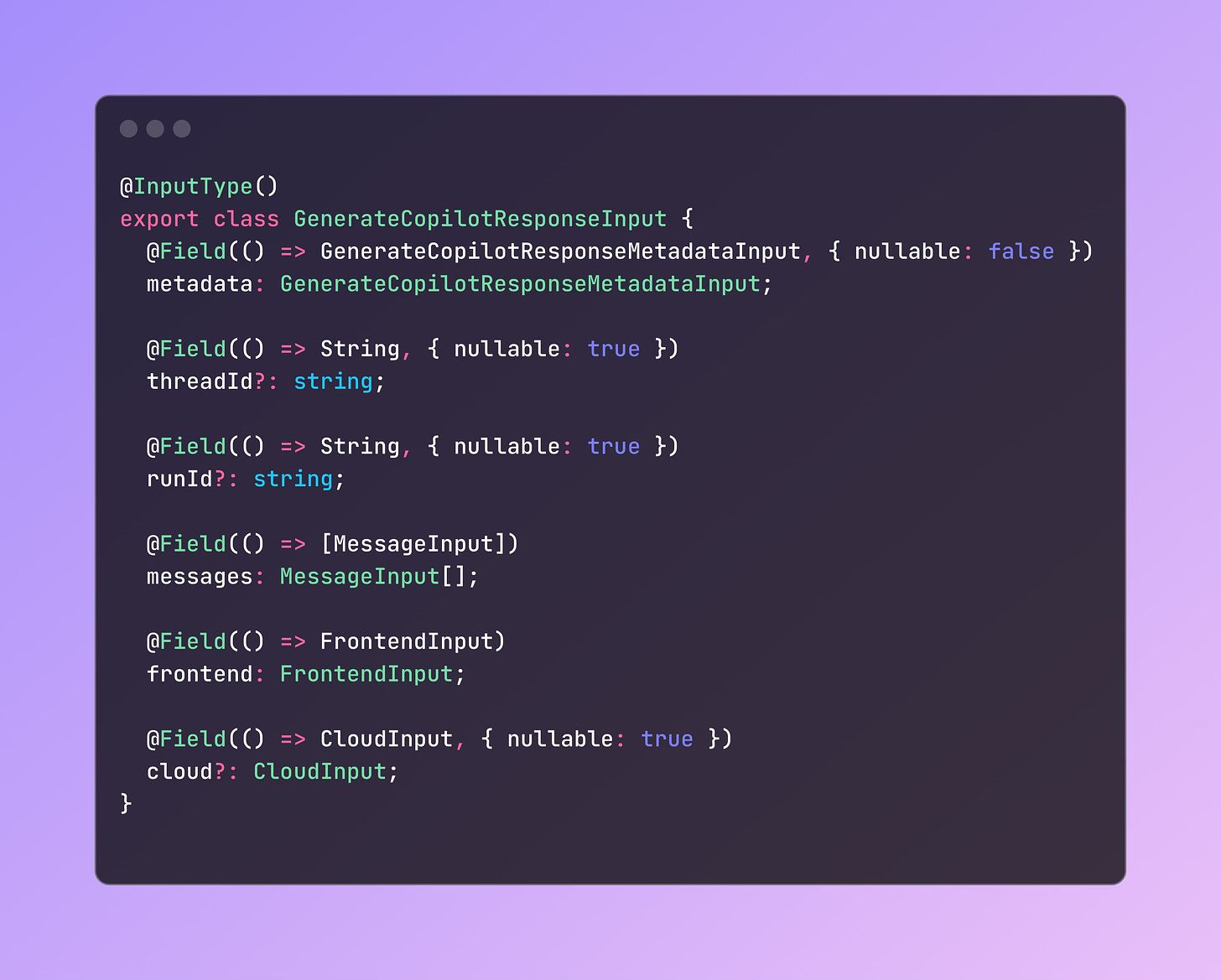

CopilotKit 1.0 delivers a major new experience built on a refined protocol and a clean abstraction layer between the app layer and the LLM engine: the Copilot Runtime. Utilizing GraphQL, this new structure is more robust, extensible, and ready to support innovation in copilot technologies.

In its first version, built for our own internal tools, the Copilot backend acted as a simple proxy to an LLM REST API call. Today, the runtime uses a dedicated GraphQL API that accepts typed, dedicated input fields and returns typed, copilot-specific output fields, satisfying the diverse needs of a modern copilot system, including the new features below.

Using GraphQL's @stream, each output field can stream data independently in parallel, crucial for real-time, user-facing LLM applications. This well-typed input and output structure supporting sophisticated streaming also simplifies contributions to our open-source framework.
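To make the idea of independently streaming fields concrete, here is a minimal TypeScript sketch. It is not the Copilot Runtime's implementation and does not use GraphQL at all: it only illustrates the pattern of several output fields emitting chunks in parallel, with the consumer receiving each chunk as soon as it is ready. The field names (`messages`, `actionArgs`) and the helpers `fieldStream`, `mergeStreams`, and `collect` are hypothetical names invented for this illustration.

```typescript
// One chunk of streamed output, tagged with the (hypothetical) field it belongs to.
type Chunk = { field: string; value: string };

// Simulates a single output field that emits its values with a fixed delay.
async function* fieldStream(
  field: string,
  values: string[],
  delayMs: number
): AsyncGenerator<Chunk> {
  for (const value of values) {
    await new Promise((resolve) => setTimeout(resolve, delayMs));
    yield { field, value };
  }
}

// Merges several streams, yielding each chunk as soon as any stream produces one.
// Order is preserved within a field, but fields interleave freely.
async function* mergeStreams(
  streams: AsyncGenerator<Chunk>[]
): AsyncGenerator<Chunk> {
  type Settled = { index: number; result: IteratorResult<Chunk> };
  const pending = new Map<number, Promise<Settled>>();

  // Request the next chunk from stream `index` and remember the in-flight promise.
  const advance = (index: number): void => {
    pending.set(
      index,
      streams[index].next().then((result) => ({ index, result }))
    );
  };

  streams.forEach((_, index) => advance(index));

  while (pending.size > 0) {
    const { index, result } = await Promise.race(Array.from(pending.values()));
    if (result.done) {
      pending.delete(index); // this field has finished streaming
    } else {
      advance(index); // keep this field streaming while the consumer works
      yield result.value;
    }
  }
}

// Consumes two fields in parallel and collects every chunk in arrival order.
async function collect(): Promise<Chunk[]> {
  const chunks: Chunk[] = [];
  const merged = mergeStreams([
    fieldStream("messages", ["Hel", "lo"], 10),
    fieldStream("actionArgs", ['{"rows"', ":[]}"], 15),
  ]);
  for await (const chunk of merged) {
    chunks.push(chunk);
  }
  return chunks;
}
```

In a user-facing copilot, the benefit of this shape is that a slow field (for example, structured action arguments) does not block a fast one (for example, chat text) from reaching the UI.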

Interested in contributing? Talk to us on the contribution channel in our Discord!

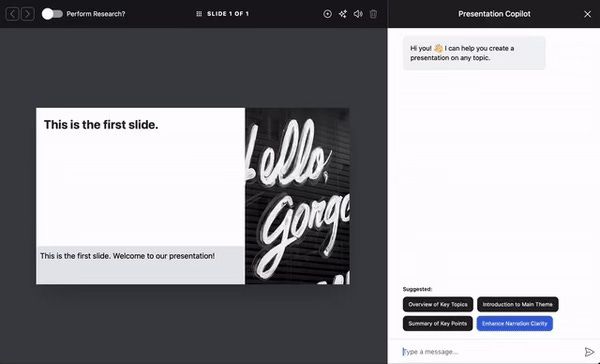

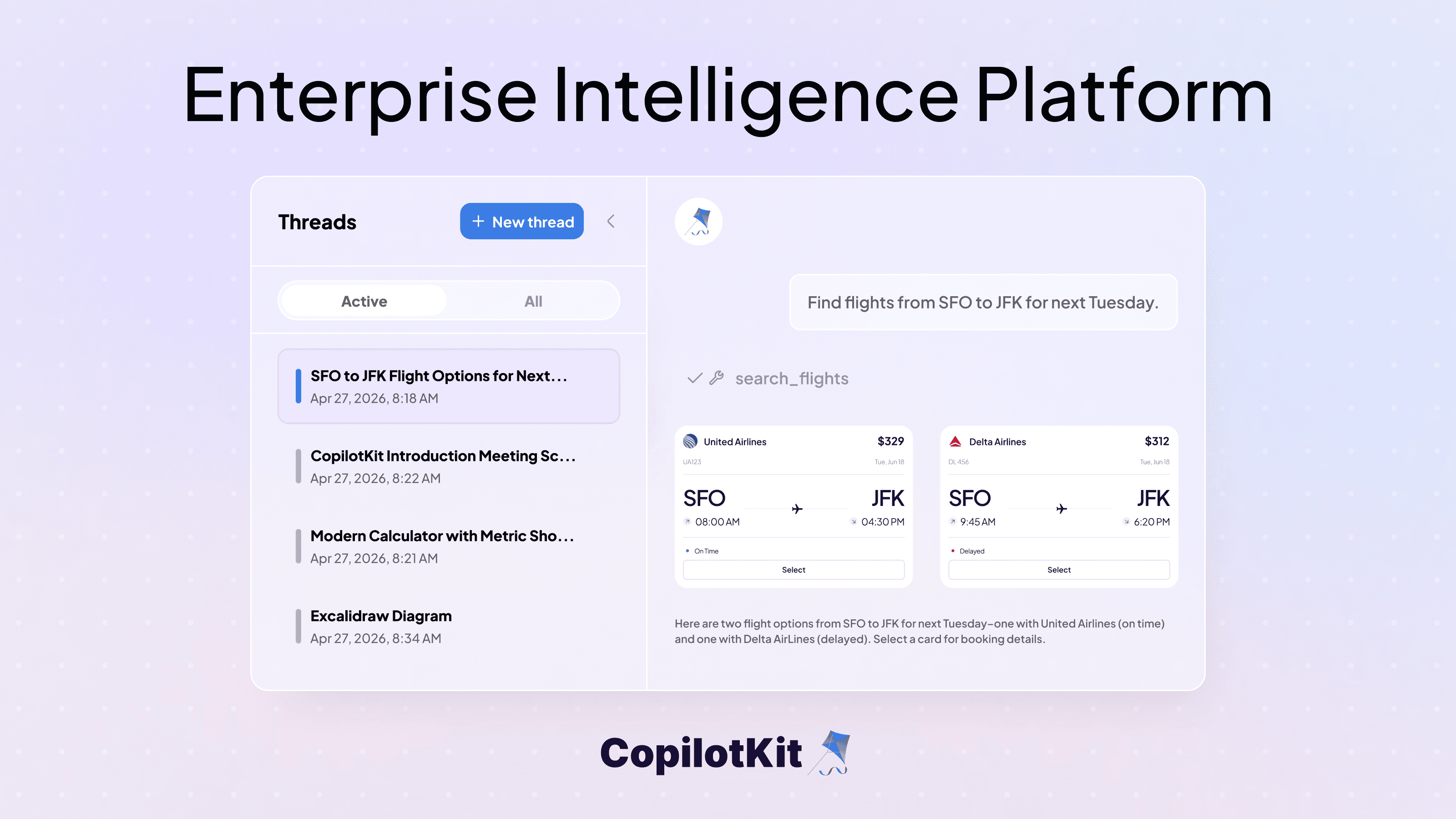

Copilot Cloud now extends the open-source CopilotKit with features for scale and enterprise requirements. The new managed Copilot Runtime enables one-click deployment, even on private clouds, and offers enterprise-ready features that require a stateful cloud environment.

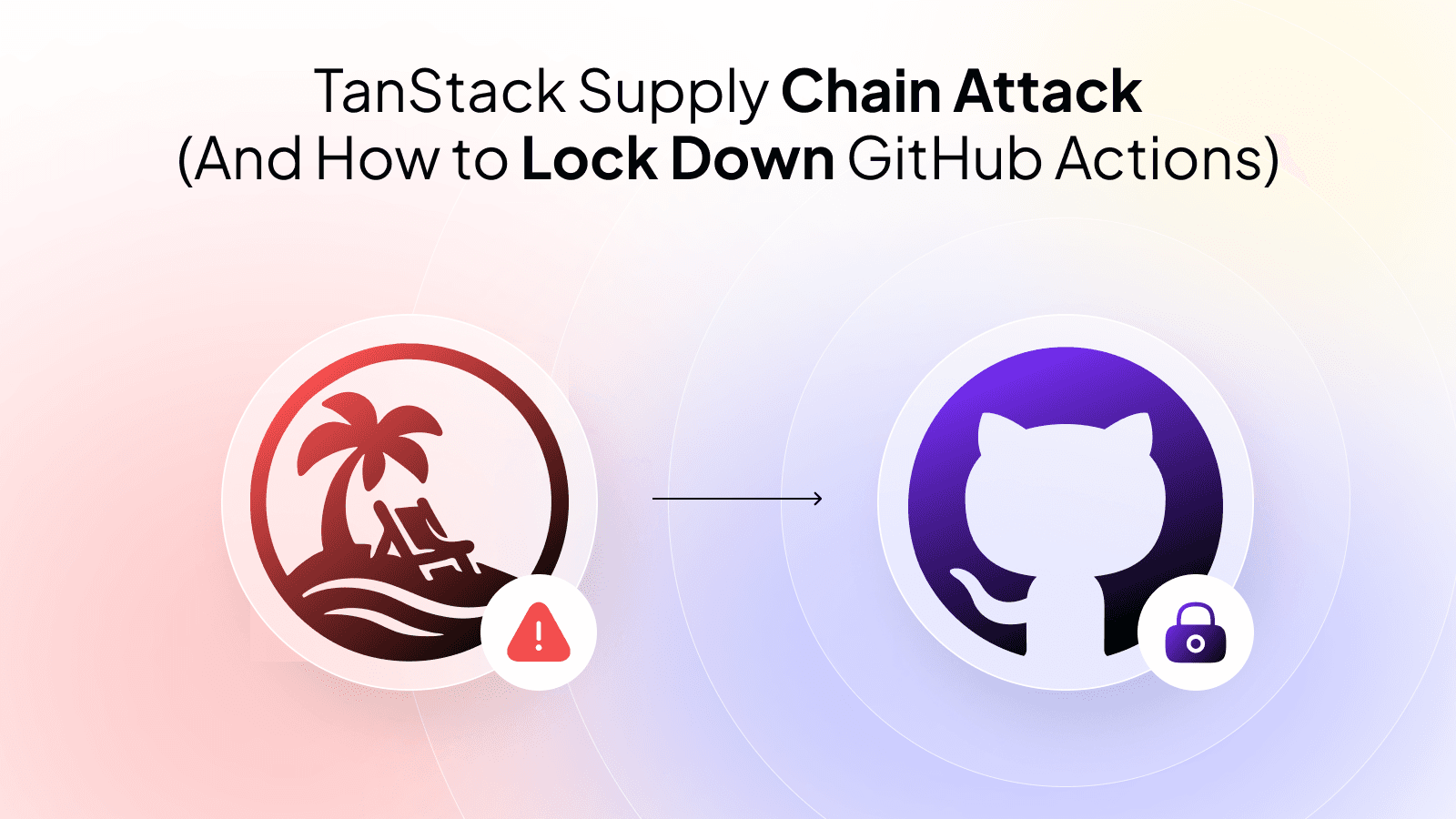

Beyond deployment, you can now leverage Copilot Guardrails, a mission-critical tool that keeps your copilot focused on the task at hand and maintains a controlled experience for your users. See how allowlists and denylists work here:

Copilot Cloud is in beta and accessible here. To get early access to upcoming beta features - like realtime RAG, chat histories and knowledge bases, and PII filtering - get in touch here.


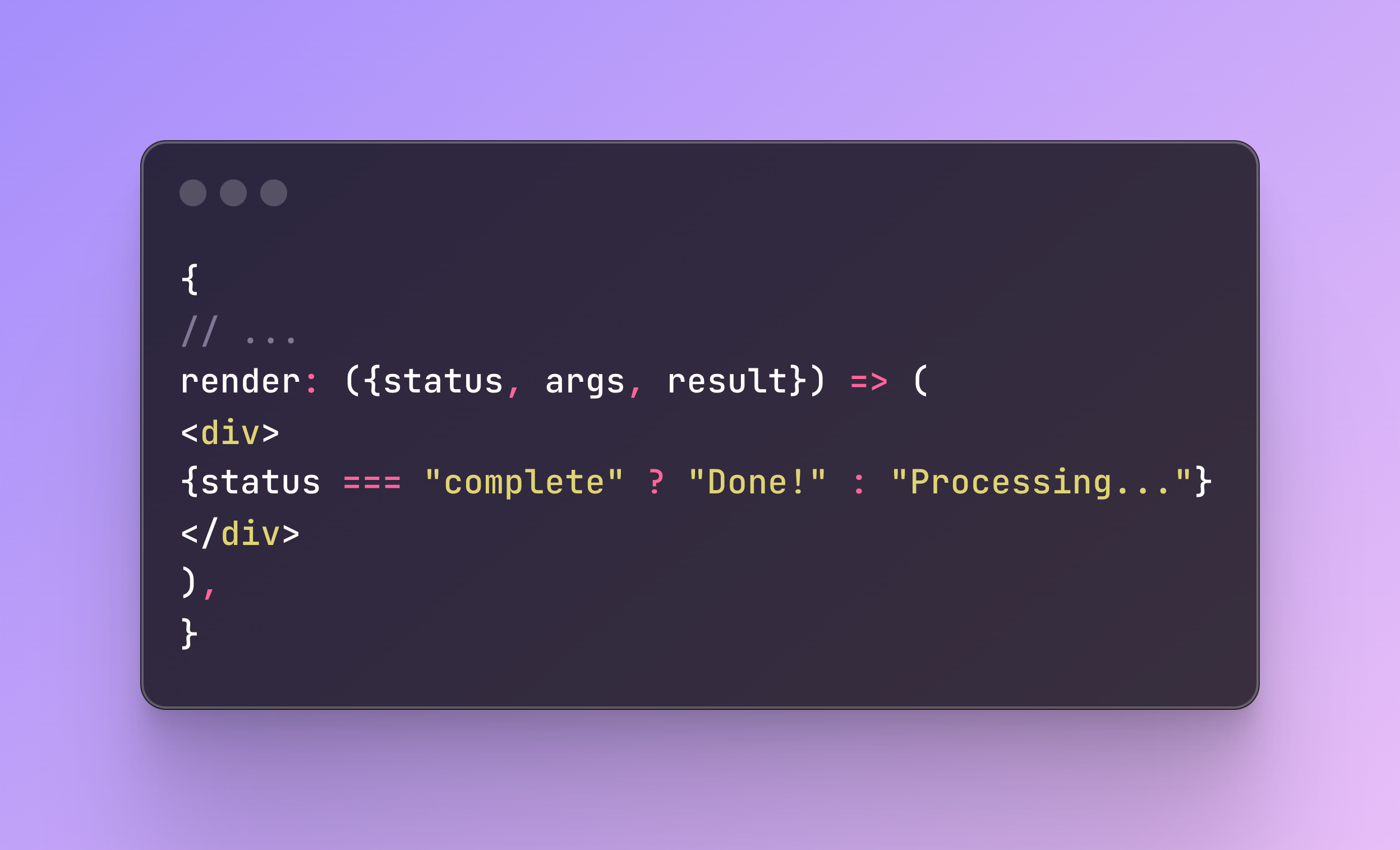

End users expect an AI copilot to be visually engaging, which makes generative UI critical for developers. You can now easily generate visual components that update in real time, from thumbnail sharing formats to dynamic messages triggered by user actions.

With `render`, developers can return a client-side React component, which is ideal for dynamic content that responds to user input and actions.

Take a look at the sample code, which shows “processing…” while the action is executing and “done” when it completes:
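As a minimal, dependency-free sketch of that pattern: the status names, the `RenderProps` shape, and the `renderActionStatus` helper below are assumptions made for illustration, not CopilotKit's exact API. A real `render` callback would typically return a React element rather than a string, so check the linked docs for the actual signature.

```typescript
// Hypothetical lifecycle states for an action; the real hook's values may differ.
type ActionStatus = "inProgress" | "executing" | "complete";

// Hypothetical props passed to a render callback.
interface RenderProps {
  status: ActionStatus;
  args: Record<string, unknown>;
}

// Maps the action's current status to what the user should see.
// Returning a string keeps the sketch free of any React dependency.
function renderActionStatus({ status }: RenderProps): string {
  return status === "complete" ? "done" : "processing...";
}
```

The callback is a pure function of the action's state, so the UI can update on every status change without extra bookkeeping.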

Read the full docs here: https://docs.copilotkit.ai/reference/hooks/useCopilotAction#generative-ui

These latest CopilotKit hooks provide clean abstractions for customizing your copilot’s behavior in powerful ways to meet real-time customer needs. Introducing Action, Readable, and Suggestions:

We can’t wait to hear what you think about the latest releases! The upcoming CopilotKit roadmap is full of exciting new features, and we can’t help but give you a sneak preview. We highly value your input and want to hear from you in our weekly office hours sessions on Discord (Thursdays at 10am PST).

Up next for CopilotKit users:

What’s in flight for your AI copilot? Get started today with v1.0 on our GitHub. Interested in being a part of the CopilotKit Cloud beta? Sign up here.
