
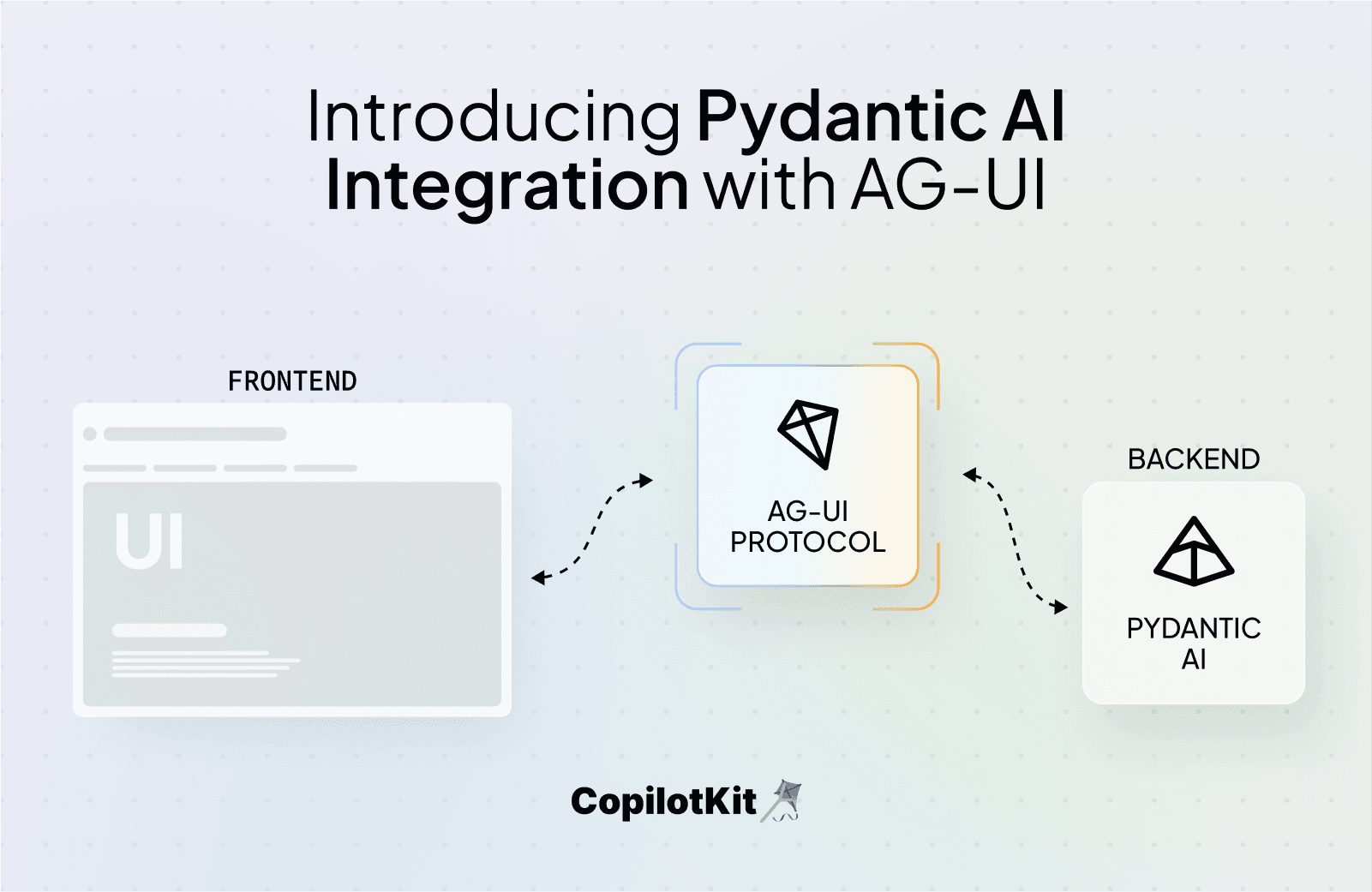

We’re excited to share that AG‑UI, the open Agent–User Interaction Protocol from CopilotKit, is now natively supported in Pydantic AI, thanks to a new integration built by Steven Hartland at Rocket Science, working hand in hand with the Pydantic AI team.

Pydantic AI is quickly becoming the standard for building reliable, type-safe AI agents in Python. The backend agent stack was already solid; this integration now adds a first-class UI layer that improves the user experience.

That’s exactly what AG‑UI is designed for. It’s a composable protocol that connects AI agents to rich, interactive frontends, with minimal glue and strong separation of concerns.
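To make the "protocol" idea concrete, here is a minimal, standard-library-only sketch of the pattern: the agent side emits a stream of typed JSON events, and the frontend rebuilds the UI state from them. The event names are modeled on those described in the AG‑UI docs, but the field set is simplified and the helpers `agent_events`, `to_sse`, and `render` are hypothetical names invented for this illustration. They are not part of Pydantic AI or any AG‑UI SDK.

```python
import json
from typing import Iterable, Iterator


def agent_events(reply: str, run_id: str = "run-1") -> Iterator[dict]:
    """Yield an illustrative sequence of AG-UI-style events for one reply.

    Assumption: event names follow the AG-UI docs; fields are simplified.
    """
    yield {"type": "RUN_STARTED", "runId": run_id}
    yield {"type": "TEXT_MESSAGE_START", "messageId": "msg-1", "role": "assistant"}
    # Stream the reply word by word, the way a model streams tokens.
    for word in reply.split():
        yield {"type": "TEXT_MESSAGE_CONTENT", "messageId": "msg-1", "delta": word + " "}
    yield {"type": "TEXT_MESSAGE_END", "messageId": "msg-1"}
    yield {"type": "RUN_FINISHED", "runId": run_id}


def to_sse(event: dict) -> str:
    """Encode one event as a Server-Sent Events frame."""
    return f"data: {json.dumps(event)}\n\n"


def render(frames: Iterable[str]) -> str:
    """Frontend side: rebuild the message text from the event frames."""
    text = ""
    for frame in frames:
        event = json.loads(frame.strip()[len("data: "):])
        if event["type"] == "TEXT_MESSAGE_CONTENT":
            text += event["delta"]
    return text.rstrip()


if __name__ == "__main__":
    frames = [to_sse(e) for e in agent_events("Hello from the agent")]
    print(render(frames))
```

The point of the sketch is the separation of concerns: the agent only knows how to emit events, and the UI only knows how to consume them. For the actual integration, refer to the Pydantic AI docs rather than this toy version.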

"We originally began the AG‑UI integration as a standalone library, but with support from the Pydantic AI team, it became clear that this functionality belonged in the framework itself."

"We collaborated closely with the Pydantic AI team to build in native support for AG‑UI, and shipped it alongside their new toolset abstraction."

The result is a seamless dev experience:

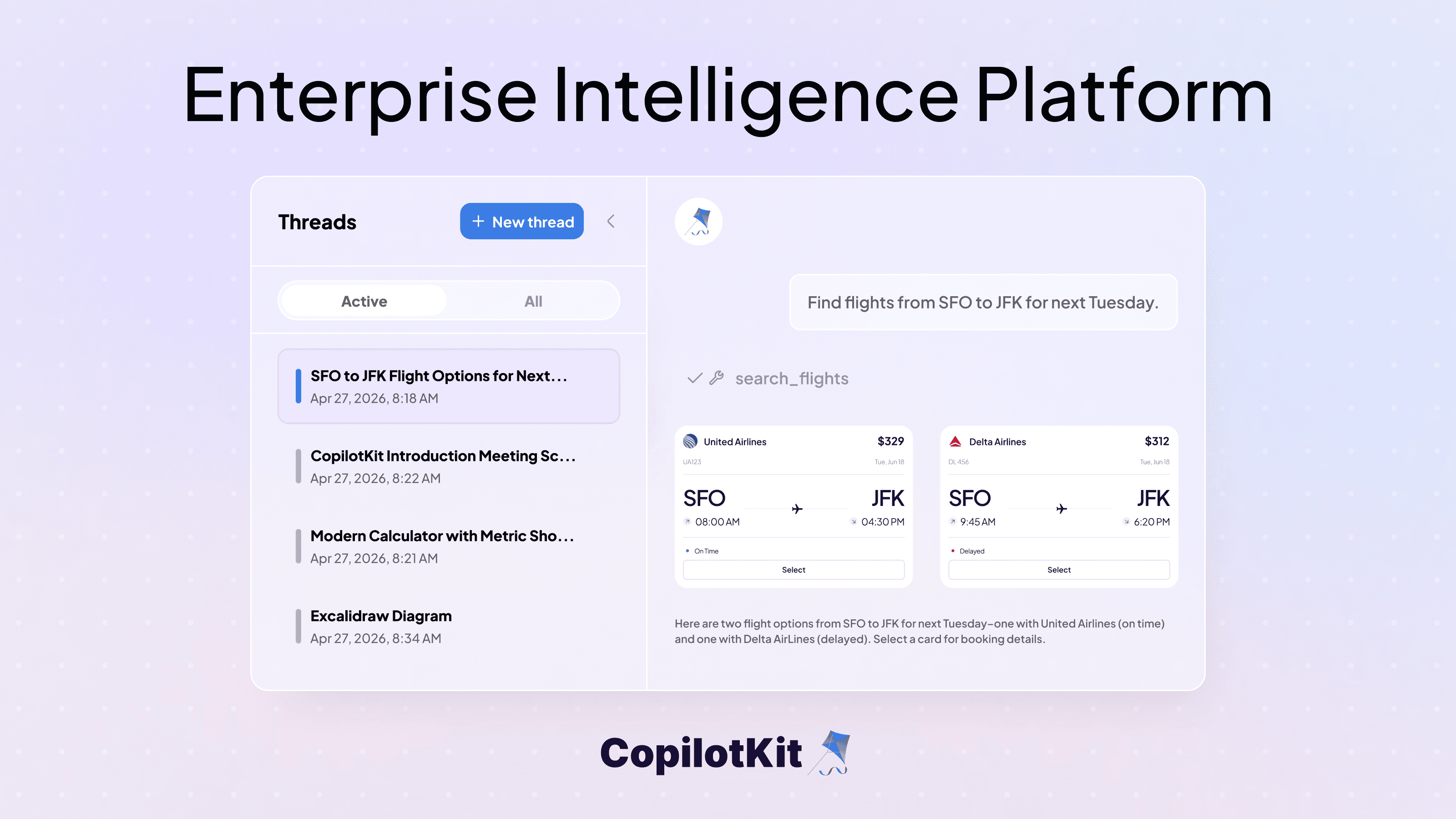

AG‑UI now works out of the box with Pydantic AI, so developers can add structured agent interaction to any AG‑UI-compatible frontend right away.

Rocket Science is very close to using this integration in production, and they’re helping drive AG‑UI forward as an open protocol for building real-time agentic systems.

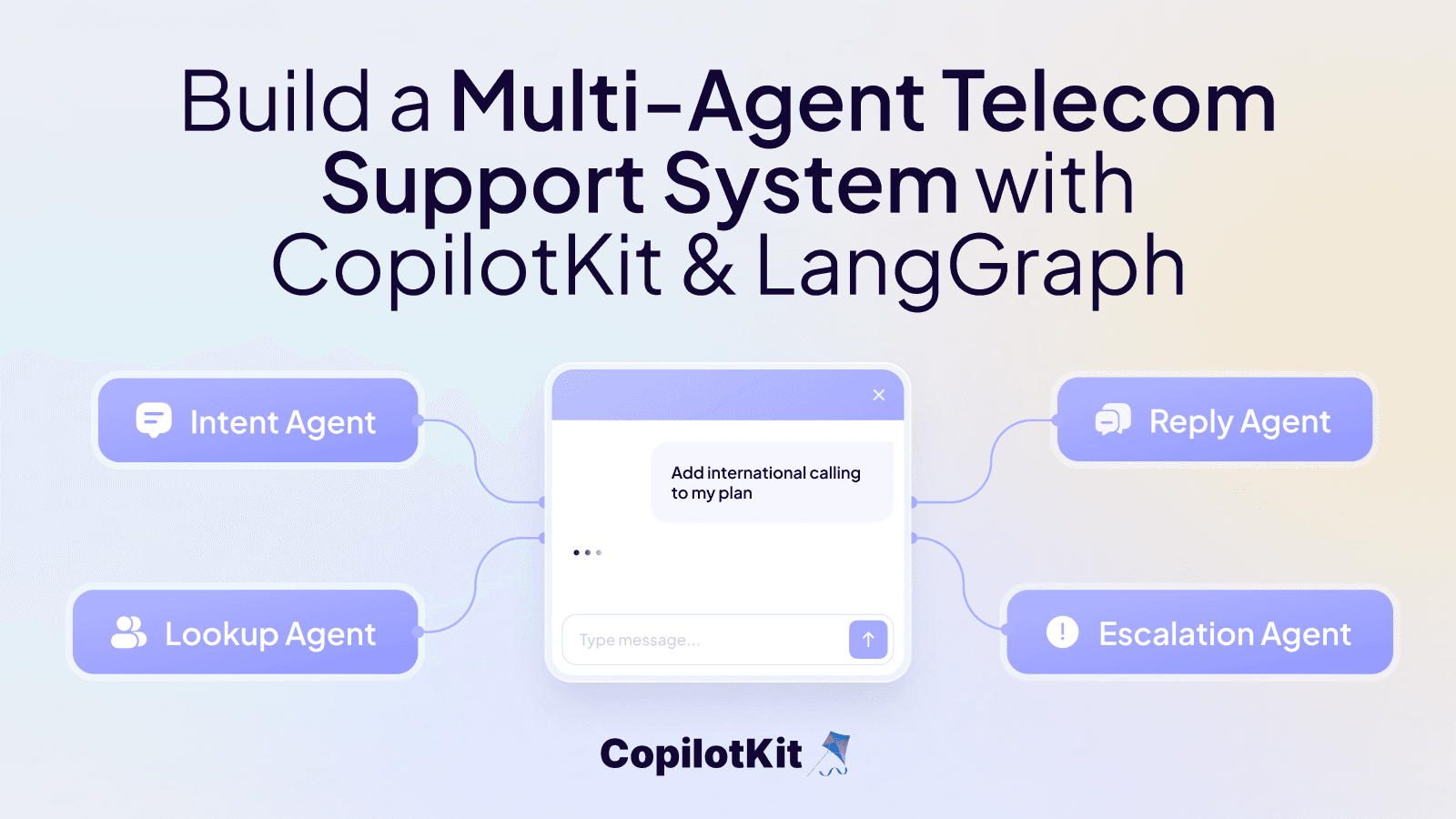

We’re collaborating closely through the AG‑UI working group, and more patterns, docs, and examples are coming soon, especially around structured editing, tool chaining, and UX for error recovery.

If you're building AI agents in Python and need a clean bridge between backend logic and interactive UI, this integration is built for you.

👉 Try it out: https://ai.pydantic.dev/examples/ag-ui/

👉 Check out the docs: https://ai.pydantic.dev/ag-ui/

Stay up to date and follow @CopilotKit, Pydantic AI, and @rocketsciencegg.
